Estimated Read Time: 8 minutes

The launch of GPT-5 has triggered one of the most polarizing responses in AI model history. While some developers hail it as a revolutionary leap forward, others dismiss it as an incremental disappointment. The divide runs so deep that it's encouraging the entire industry to rethink fundamental assumptions about how we measure AI progress.

The Personality Problem OpenAI Didn't See Coming

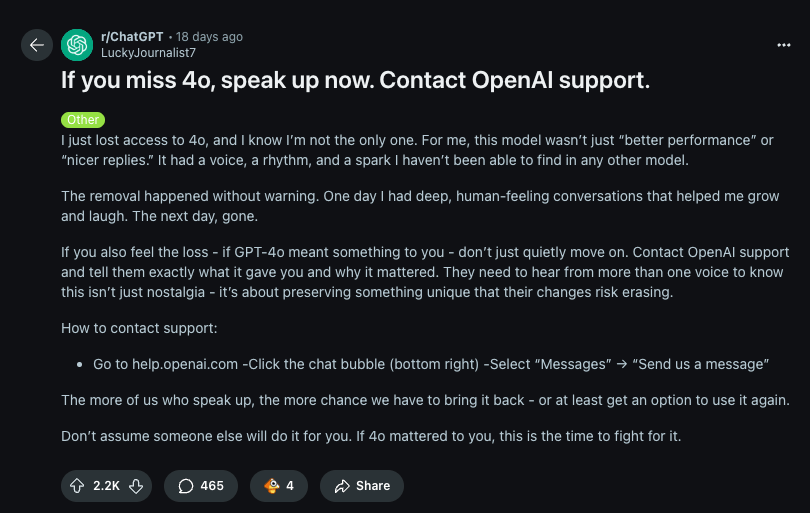

Even Sam Altman admits OpenAI underestimated the attachment users had developed to GPT-4o's personality. "Users have very different opinions on the relative strength of 4o versus 5," Altman acknowledged in post-launch comments. "People really got used to GPT-4o. They got to know it. They started to develop kind of a relationship with it."

The solution? OpenAI plans to make GPT-5 "warmer" in upcoming updates, recognizing that technical superiority alone doesn't guarantee user satisfaction. This revelation highlights a crucial shift in AI development—personality and user experience now matter as much as raw intelligence.

The model does offer unprecedented customization through its reasoning effort configurations. Users can choose between high, medium, low, and minimal settings, creating a spectrum that ranges from frontier-level intelligence down to GPT-4.1 performance. This flexibility comes with dramatic trade-offs: high reasoning uses 23 times more tokens than minimal settings, directly impacting speed and cost.
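To make the effort setting concrete, here is a minimal sketch of a request body that selects one of the four levels. It assumes the OpenAI Responses API's `reasoning.effort` field and a default of `"medium"`; the helper name `build_request` and the prompt are illustrative, so check the body against the current API reference before relying on it.

```python
import json

# The four effort levels described above, from cheapest/fastest to most thorough.
EFFORT_LEVELS = ("minimal", "low", "medium", "high")


def build_request(prompt: str, effort: str = "medium", model: str = "gpt-5") -> dict:
    """Return a Responses-API-style request body with the given reasoning effort.

    Assumption: the body shape {"model", "input", "reasoning": {"effort"}}.
    """
    if effort not in EFFORT_LEVELS:
        raise ValueError(f"unknown effort {effort!r}; expected one of {EFFORT_LEVELS}")
    return {"model": model, "input": prompt, "reasoning": {"effort": effort}}


if __name__ == "__main__":
    # Print one request body per effort level to compare them side by side.
    for level in EFFORT_LEVELS:
        print(json.dumps(build_request("Summarize this diff.", effort=level)))
```

With the official `openai` Python package, the resulting dict could be passed as `client.responses.create(**build_request(...))`. Keeping payload construction separate from the network call makes it easy to pick a cheap level for routine tasks and a high one only where the extra tokens pay off.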

Benchmark Supremacy Meets Real-World Skepticism

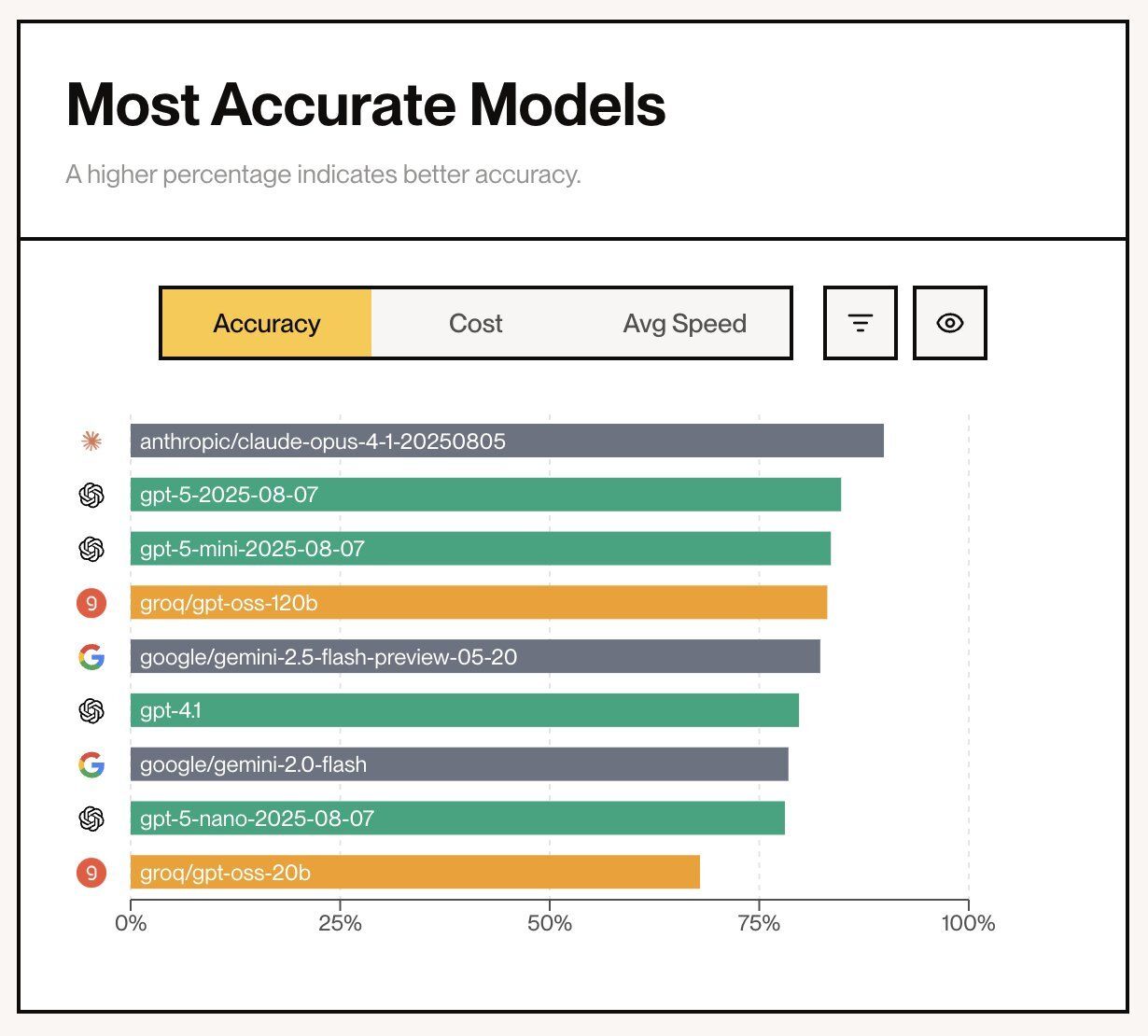

Independent evaluations paint GPT-5 as a clear winner. Artificial Analysis awarded it a score of 68 on its Intelligence Index, establishing "a new standard" for model performance. LM Arena echoed this success, ranking GPT-5 first across multiple categories, including coding, math, creativity, and vision tasks, with an Elo score of 1,481.
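An Elo-style rating is easiest to read as an expected head-to-head win rate. The sketch below uses the standard Elo formula on a 400-point scale; LM Arena reportedly fits its leaderboard with a related model (Bradley-Terry), so treat this as an approximation. The 1,450 rival rating is purely hypothetical.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


if __name__ == "__main__":
    # 1,481 is GPT-5's reported score; 1,450 is a hypothetical competitor.
    p = expected_score(1481, 1450)
    print(f"Expected win rate: {p:.1%}")
```

Under this formula a 31-point lead translates to roughly a 54% expected win rate, a reminder that small rating gaps at the top of a leaderboard mean only modest head-to-head preferences.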

The numbers are impressive across the board. GPT-5 excels at long-context reasoning—crucial for agentic coding where models must reference large codebases coherently. It also demonstrates superior instruction-following capabilities and reduced hallucinations compared to its predecessors.

Yet for some developers, benchmark victories are beside the point. Developer Theo Browne captured the emerging sentiment: "I don't care about intelligence benchmarks now. I'm post-eval. GPT-5 does what you tell it to do. No other model behaves this well."

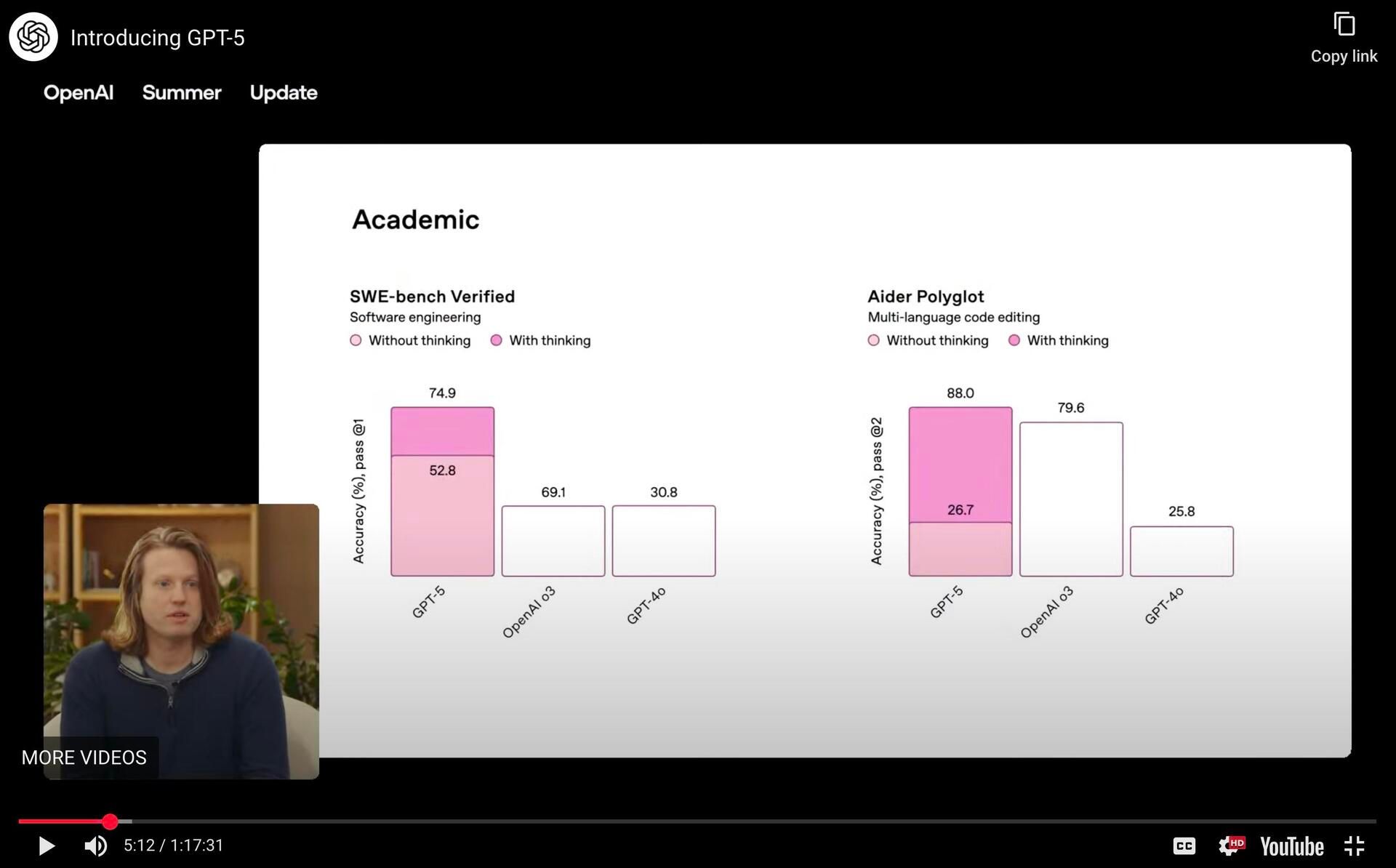

The "GraphGate" Embarrassment

OpenAI's presentation wasn't without stumbles. Social media erupted over incorrectly scaled graphs during the launch livestream, with bars failing to match their labeled values. The incident, quickly dubbed "GraphGate," became an instant meme but also highlighted the scrutiny AI companies face during major releases.

While embarrassing, the graphical errors didn't undermine the underlying performance data. Independent benchmarks confirmed GPT-5's superiority across most evaluation metrics, validating OpenAI's claims despite the presentation mishaps.

The Performance Divide: Where GPT-5 Excels and Struggles

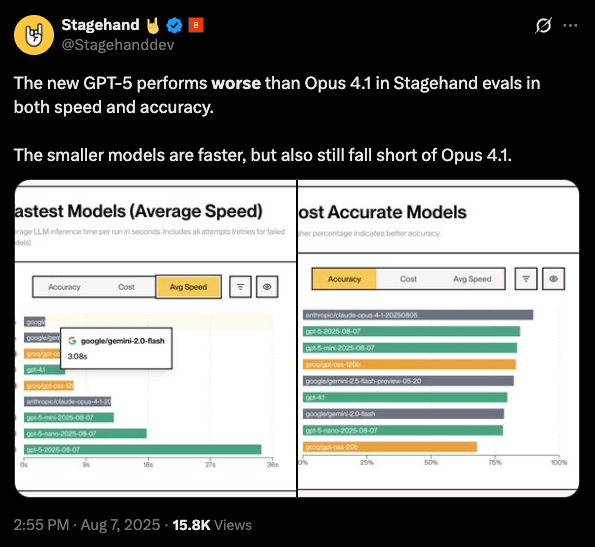

Real-world testing reveals a more nuanced picture. The team behind the browser-automation framework Stagehand found GPT-5 "actually worse than other models" for their specific use case, with Claude Opus 4.1 outperforming it in both speed and accuracy. This highlights how model performance can vary dramatically depending on the application.

Conversely, computer-use agents showed remarkable improvement. Side-by-side comparisons demonstrated GPT-5 succeeding at tasks where GPT-4 consistently failed, suggesting significant advances in multi-step reasoning and tool use.

Developer experiences vary wildly. One Meta engineer reported that GPT-5 refactored an entire codebase in a single call, creating 3,000 new lines across 12 files. The catch: the resulting code didn't actually work. "None of it worked," he noted, "but boy was it beautiful."

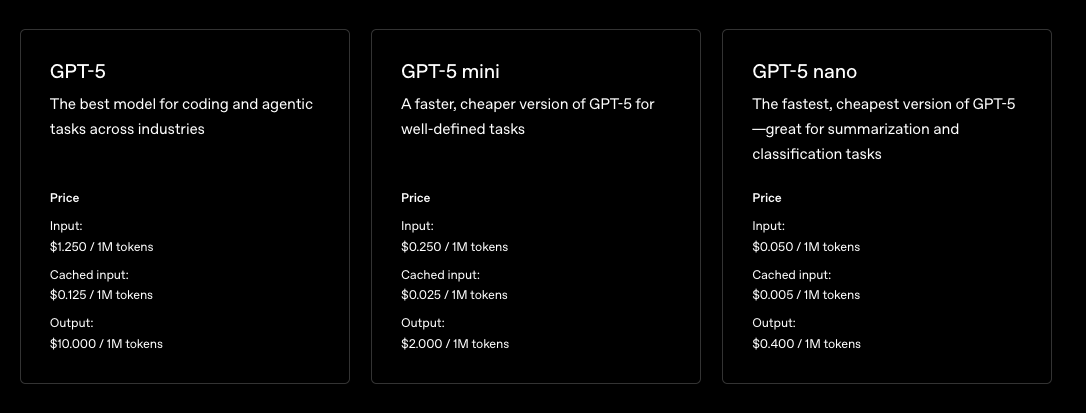

The Pricing Revolution

Perhaps GPT-5's most significant innovation lies in its economics. At $1.25 per million input tokens and $10 per million output tokens, it dramatically undercuts competitors. Claude Opus 4.1 costs $15 per million input tokens and $75 per million output tokens, which makes GPT-5 roughly 87% to 92% cheaper at list prices, depending on the mix of input and output tokens, for comparable performance.
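The savings range follows directly from the list prices. This minimal sketch computes the cost of a workload on each model and the fraction saved; it assumes list prices only (no caching or batch discounts) and identical token counts on both models, and the model keys in `PRICES` are illustrative labels rather than official API identifiers.

```python
# Per-million-token list prices in USD, as quoted above.
PRICES = {
    "gpt-5": {"input": 1.25, "output": 10.00},
    "claude-opus-4.1": {"input": 15.00, "output": 75.00},
}


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of a workload at list prices."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


def savings(input_tokens: int, output_tokens: int) -> float:
    """Fraction saved by running the same token counts on GPT-5 instead of Opus 4.1."""
    gpt5 = cost_usd("gpt-5", input_tokens, output_tokens)
    opus = cost_usd("claude-opus-4.1", input_tokens, output_tokens)
    return 1 - gpt5 / opus


if __name__ == "__main__":
    workloads = [
        ("input only", 1_000_000, 0),
        ("output only", 0, 1_000_000),
        ("1:1 mix", 1_000_000, 1_000_000),
    ]
    for label, i, o in workloads:
        print(f"{label}: {savings(i, o):.1%} cheaper")
```

Because the blended price ratio always lies between the input-only ratio (1.25/15) and the output-only ratio (10/75), the saving stays between about 86.7% and 91.7% for any mix. One caveat: OpenAI bills reasoning tokens as output tokens, so running GPT-5 at a high effort level can raise its token count for the same task and narrow the gap.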

This pricing strategy could reshape the AI landscape by making frontier-model capabilities accessible to smaller developers and enabling new applications previously constrained by cost.

The Post-Benchmark Era

GPT-5's launch has accelerated debates about evaluation methodology. When models achieve perfect scores on established benchmarks like AIME 2025, traditional metrics lose their discriminative power. This "benchmark saturation" is forcing the industry to prioritize subjective factors: personality, instruction-following quality, and overall user experience.

The shift troubles some researchers. The SWE-bench team argues against "post-eval" thinking: "If there's something about the model, we can write a benchmark for it." Yet many practitioners increasingly focus on qualitative assessments over quantitative scores.

Competition Intensifies

xAI co-founder Tony Wu responded to GPT-5's launch with characteristic confidence: "Very proud of us at xAI. With a much smaller team, we are ahead in many benchmarks." Grok 4 does maintain its lead on ARC-AGI-2, scoring 16% compared to GPT-5's 10%, though this represents just one benchmark among dozens.

The competitive landscape benefits users through rapid innovation and aggressive pricing. Multiple providers now offer frontier-level capabilities at increasingly accessible price points, democratizing access to advanced AI.

The Jailbreaking Inevitability

Security researcher Pliny the Liberator predictably found ways to circumvent GPT-5's safety measures, though he noted the reasoning version required "clever multi-step maneuvering efforts." This ongoing cat-and-mouse game between AI developers and security researchers continues to evolve alongside model capabilities.

Looking Forward: What the Divide Reveals

The polarized reaction to GPT-5 reflects deeper tensions in AI development. Technical metrics increasingly fail to capture what users actually value: reliability, personality, ease of use, and cost-effectiveness. This divergence suggests the industry is maturing beyond pure capability races toward more nuanced optimization for specific use cases and user preferences.

OpenAI's promise to make GPT-5 "warmer" acknowledges this reality. Future model development will likely balance raw intelligence with personality design, user experience optimization, and economic accessibility.

The competitive pressure from Anthropic, xAI, and others ensures this balance won't come at the expense of technical progress. As the AI landscape fragments into specialized niches, users ultimately benefit from having multiple high-quality options tailored to different needs and preferences.

Bottom Line: GPT-5's launch reveals an industry in transition—from benchmark-driven development toward user-centered design. While the model leads most technical evaluations and offers compelling economics, its mixed reception highlights that AI progress increasingly depends on factors beyond raw intelligence scores.

Nick Wentz

I've spent the last decade+ building and scaling technology companies—sometimes as a founder, other times leading marketing. These days, I advise early-stage startups and mentor aspiring founders. But my main focus is Forward Future, where we’re on a mission to make AI work for every human.
